Legacy methods started with the process and many AI controls today start with algorithms; but for agentic AI, proper oversight requires a phase shift that must start with the data

The rise of generative AI (GenAI) has driven corporate and research expectations, investments, and innovation across every industry and discipline. However, in just two short years, and even before GenAI production systems have reached extensive scale and maturity, new variants and challenges are already altering deployment designs and operating strategies.

In fact, 92% of companies say they will invest more in GenAI over the next three years, yet only 1% state that their investments have reached maturity, according to a recent workplace report from McKinsey & Co.

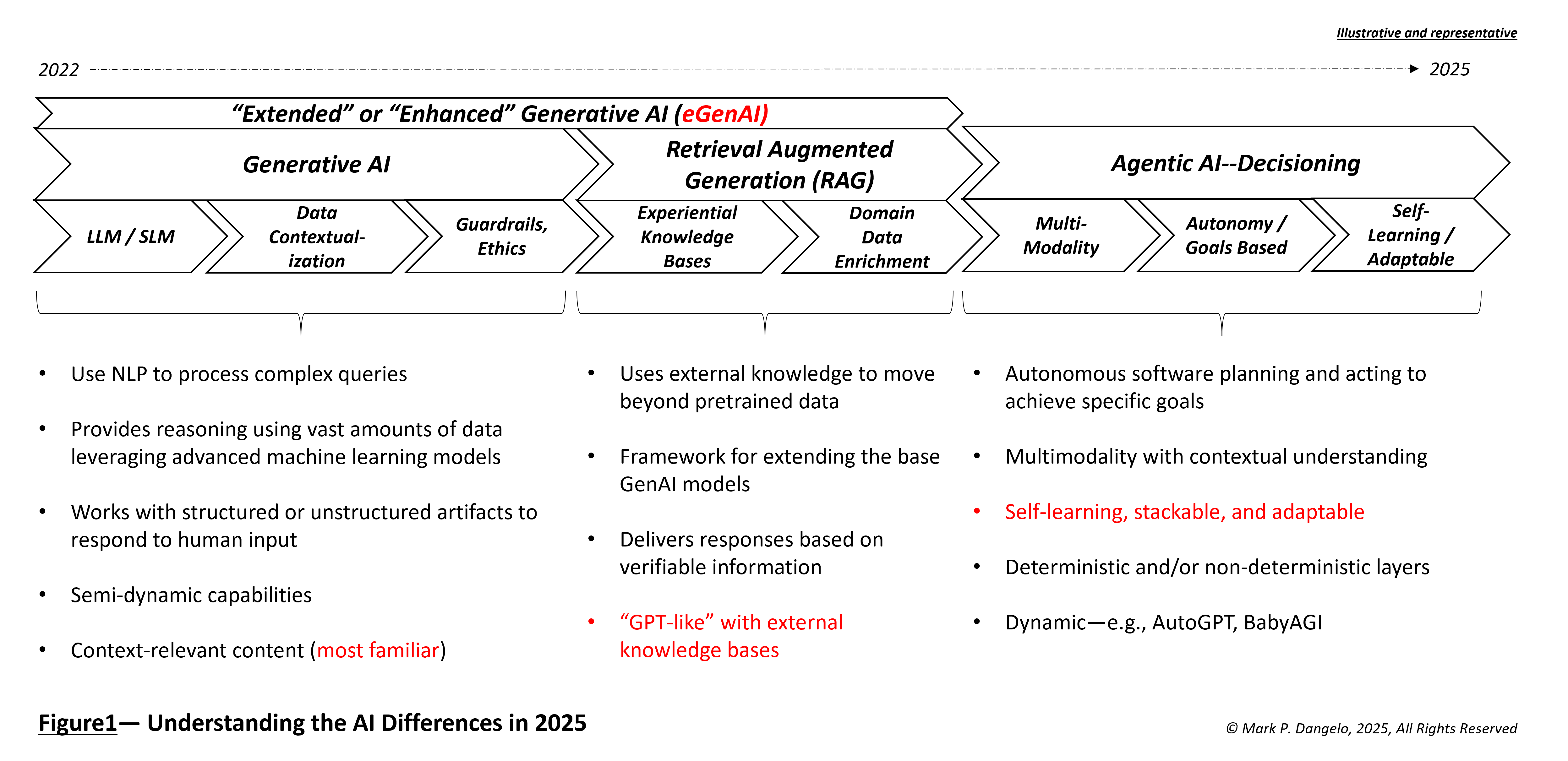

For leaders currently struggling with the terminology and designs of GenAI (protocols, messaging, large and small language models, vector databases, algorithms, and more), a next-generation shift is already underway. It builds on top of early directions while introducing a new set of requirements and governance demands. Will the AI momentum slow down? Will new AI innovations become mere extrapolations of early-stage data and intelligence advances? Or will something more profound happen?

Indeed, this pace of AI change is dwarfing anything previously experienced. What strikes me, however, is the question: How do you instill robust oversight for solutions that are temporal, self-learning, and adaptive based on the data they ingest? Everyone has their own understanding of what AI is from the daily blast of media articles, so establishing a baseline is necessary.

In 2023, the term pre-training took on new importance as ChatGPT permanently changed the discussion of systems and data, in addition to costs, cloud architectures, and skills needed. By mid-2024, enterprises were witnessing the rise of retrieval-augmented generation (RAG), which uses external data to improve the accuracy of GenAI and their industry’s large language models. Now as 2025 emerges, corporate leaders are being blanketed with yet another evolution or iteration of GenAI: agentic AI.

To understand how the question "What is AI?" has progressed, you need to compare how legacy priorities treated accountability and design with the emerging questions surrounding accountability of data. It is this data that will simultaneously feed hundreds of layered AI components, not just the one or two that are simplistically anticipated today.

Legacy data brings next-gen complexity

Underneath these marvels of AI algorithms and chip technologies, the demand for usable data to improve the accuracy, longevity, and auditability of capabilities continues to strain internal departments and compliance personnel. However, as AI systems explode in their usage and deployment, the vast questions surrounding data complexity — its lineage, ingestion, storage, manipulation, and cross-domain usage — are often a black box.

As 2025 unfolds with macroeconomic and political uncertainties, what is certain is that given AI’s expansive trajectory, data can no longer be isolated or reviewed at a system level. When AI systems are pre-trained on separate data ecosystems, when AI systems begin to feed their outputs to downstream systems, and when AI results are materially different from common control criteria over time, then how will these systems be re-trained on event-driven data and at what cost?
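One way to make the phrase "materially different from common control criteria" concrete is a simple drift check. The sketch below is illustrative only: the function name `drift_exceeds_tolerance`, the 10% tolerance, and the sample scores are all assumptions, not values from this article or from any specific governance framework.

```python
from statistics import mean


def drift_exceeds_tolerance(baseline, recent, tolerance=0.10):
    """Return True when the mean of `recent` deviates from the mean of
    `baseline` by more than `tolerance`, measured relative to the baseline.

    `tolerance` is a hypothetical control criterion; a real program would
    set it per system and per metric.
    """
    base = mean(baseline)
    if base == 0:
        raise ValueError("baseline mean must be non-zero for a relative comparison")
    return abs(mean(recent) - base) / abs(base) > tolerance


# Hypothetical quality scores for one AI system at two points in time.
baseline_scores = [0.82, 0.80, 0.81, 0.79]
recent_scores = [0.70, 0.68, 0.71, 0.69]

# A True result would be the trigger for human review or re-training.
needs_review = drift_exceeds_tolerance(baseline_scores, recent_scores)
```

A check like this only flags that outputs have moved; deciding whether to re-train, and at what cost, remains a governance decision.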

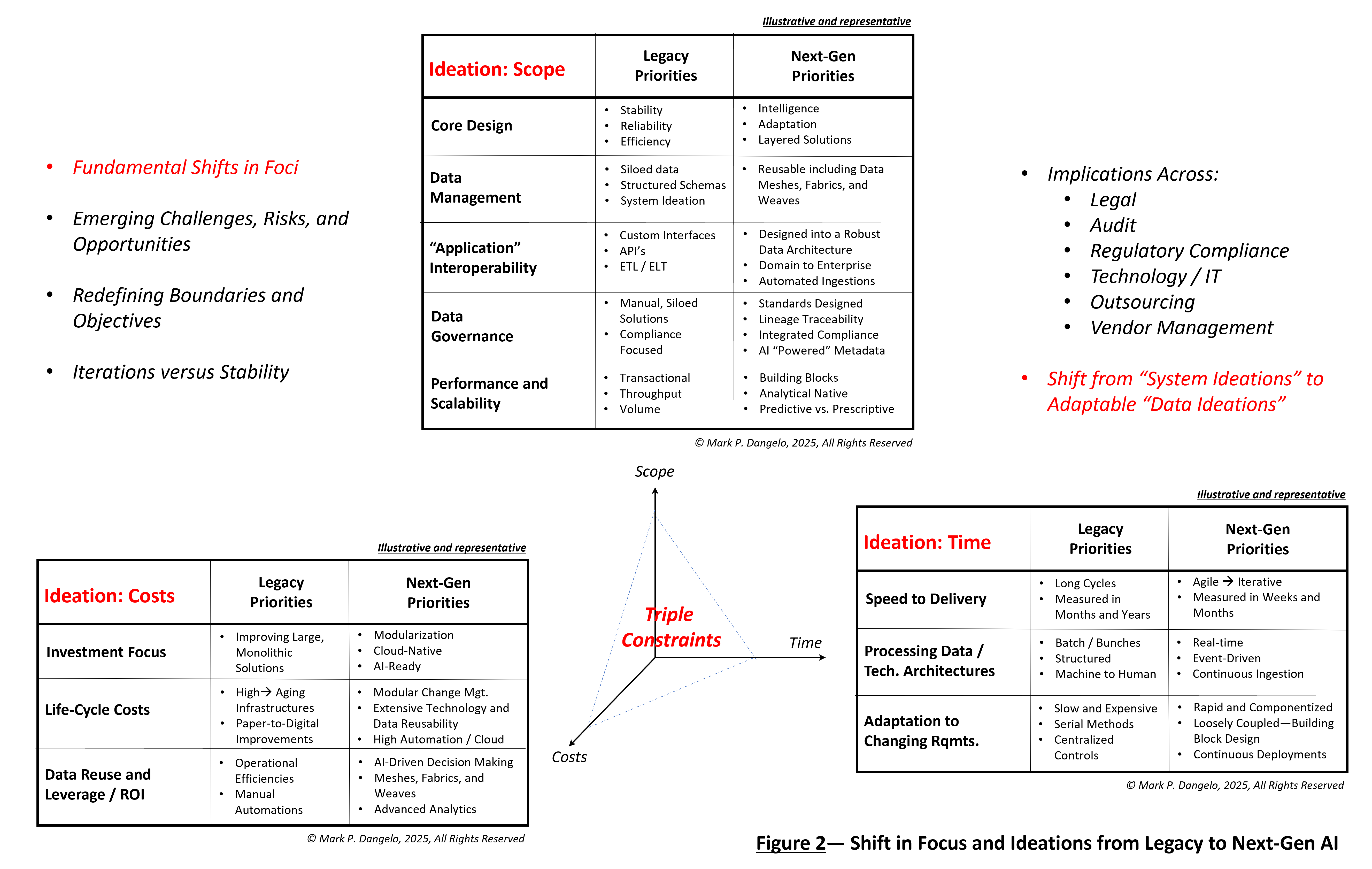

AI discussions today are energetic and promising, especially when solving business demands for efficiency, customer service, profitability, and competitive distinction. Yet there are tradeoffs that must be made when it comes to scope, costs, and time (often referred to as the triple-constraint of program management and budgeting).

After three years, the development and adoption of GenAI solutions are becoming more common. The legal, compliance, and audit considerations are clearer, and investors from individuals to private equity now conduct due diligence of these solutions to ensure business rule conformity and valuation. Nonetheless, the methods and techniques that applied legacy priorities to guide early AI solutions are steadily losing efficacy and importance when it comes to next-gen AI solutions that may possess greater intelligence and shared data.

These shifts, as represented in Figure 2, when mapped against an organization’s triple constraints, illustrate distinctive requirements that are not currently accounted for within the enterprise and its cohesive governance designs. In short, the data controls, compliance, and auditability for a small number of emerging AI solutions will not provide the robustness and scalability demanded when agentic AI begins to migrate or replace early-stage AI capabilities.

The diagram raises another question: Who has the roadmaps to migrate current AI solutions to next-gen AI solutions? And when an AI system is re-trained or retired, what happens to all that data?

By establishing a baseline for the information already provided, we can see from the details in the diagrams several factors, including:

- The controls and accountability for large numbers of AI systems change the discussion of data, its architecture, its reuse, and most importantly, its event-driven ingestion, which in turn alters AI outputs (model efficacy).

- The mechanisms and oversight employed for traditional passive, sample-driven conformity will fail consistently due to interconnectivity, real-time adaptations, and speed of change.

- The challenges of security, privacy, and ethical data take on new dimensions when factoring in agentic AI and its (likely) creation of synthetic data, which in turn is fed back into the system as part of event-driven feedback and continuous improvement.

- Skills and transformative process guardrails will lag agentic AI capabilities. For example, the newest AI chipset performs in one second the calculations that would have taken a human 125 million years.

Finding proper agentic AI governance

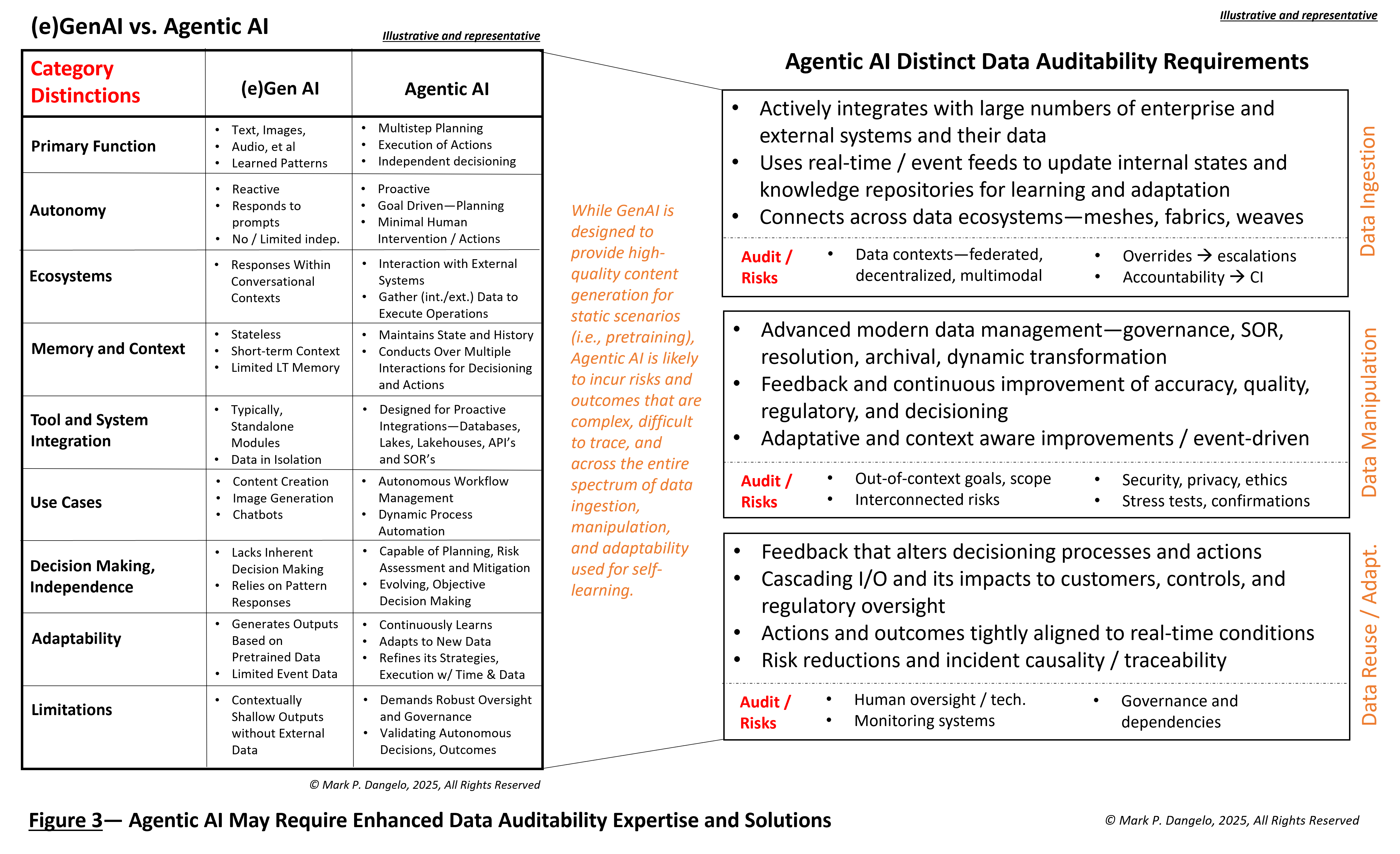

However, beyond the evolution of GenAI and beyond its expansion into RAG, agentic AI can leverage the positive designs underway to aid with its continual refinement and self-learning decision-making. In Figure 3, these are compared against the new demands being placed on data.

It is in this final illustration that we see a fundamental and permanent shift of priority — data over system ideation. Legacy methods started with the process, and many AI controls today start with algorithms. For agentic AI, there is a phase shift that must start with the data because these thinking, adjusting systems are built not on rules but on goals. Indeed, agentic AI requires accurate, reusable, and auditable data sources.
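To illustrate what "accurate, reusable, and auditable" could mean at the data level, here is a minimal sketch of a lineage record. Everything in it is hypothetical: the `LineageRecord` fields, the `fingerprint` and `register` helpers, and the dataset and agent names are assumptions made for illustration, not a standard or a product API.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class LineageRecord:
    """Hypothetical audit entry describing one data source."""
    dataset_id: str
    source: str
    content_sha256: str      # fingerprint used to detect later changes
    is_synthetic: bool       # flags AI-generated data fed back into the system
    consumed_by: tuple = ()  # AI components that reuse this data
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def fingerprint(rows):
    """Hash a canonical JSON form of the rows so equal content gives equal hashes."""
    canonical = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def register(rows, dataset_id, source, is_synthetic, consumed_by=()):
    """Create a lineage record for the given rows."""
    return LineageRecord(
        dataset_id, source, fingerprint(rows), is_synthetic, tuple(consumed_by)
    )


# Hypothetical dataset shared by two agents.
rows = [{"customer": "A-1", "balance": 120.5}]
record = register(
    rows, "ledger-2025-01", "core-banking-export",
    is_synthetic=False, consumed_by=["agent-pricing", "agent-risk"],
)

# An auditor can later recompute the fingerprint to confirm the data is unchanged.
unchanged = fingerprint(rows) == record.content_sha256
```

The point of the sketch is the ordering of concerns: the record describes the data first, and the AI components appear only as consumers of it.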

Corporations and their innovative leaders are experiencing a technological and generational function shift. The traditional legacy control playbooks and prescriptive development approaches are poorly equipped to address the next-gen requirements. Data is the key for explosive algorithmic intelligence that will be increasingly segmented into reusable modular components that are stacked one upon the other.

Finally, the fundamental challenge for every organization, and for those overseeing AI automation, comes down to these questions: Can we adapt to meet the technological realities? Can we shift the prioritizations and governance to data before siloed, cascading AI risks result in unintended havoc? Will we, as humans in the AI loop, chase the AI algorithms, and will we repeat the same mistakes we made with the rapid adoption of financial and regulatory technologies a decade prior?

For agentic AI in 2025 and its impacts on business models and operational performance, oversight will represent a continual journey — not a destination.
